The Line Between AI-First and AI Theater

Letting product ownership be your guide

You have probably called yourself an AI-first product manager lately.

You use AI for discovery, market research, and prototypes. It feels current, capable, and forward-looking.

As you move closer to building with AI, the definition becomes harder to hold.

Somewhere between experimentation and production, AI-first starts to blur. Building accelerates. Responsibility waits.

You can build an impressive AI demo that works, looks right, and moves fast. You can also realize it cannot be shipped.

That is where the line between AI-first and AI theater appears.

The issue is not demo quality. It is outcome alignment. A demo shows a future. A product commits to one.

A product team once presented a polished dashboard for a new feature. Stakeholders liked it. Engineering asked how the data would be governed across regions. No one owned the answer. The demo lived on. The feature did not.

What AI-first usually means at first

AI-first begins as thinking support.

You explore scenarios.

You test assumptions.

You build demos.

You show what might be possible.

AI sits at the edges of product work and sharpens judgment. Prioritization improves. Tradeoffs feel clearer. Patterns emerge faster.

Product work feels lighter.

A PRD draft that once took days now appears in an hour. The reasoning sounds confident. The language is clean. The risk is subtle. Speed arrives before certainty.

What that definition quietly misses

Success in AI-assisted thinking naturally leads to product experiments with AI.

The step from “AI helps me think” to “AI touches the product” feels small.

Once AI touches the product, even a simple experiment carries consequences.

Common patterns appear:

A capacity mock-up blocked by security and accessibility

A demo UI that requires re-architecture to integrate

A chatbot with no retraining path

A recommendation engine with no failure behavior

A prototype that exposes intellectual property

The experiment earns strong feedback.

It still cannot move forward.

Because no one owns productization.

A builder PM once intercepted customer telemetry to predict capacity exhaustion. The model worked. The charts looked right. Legal asked where consent was recorded. The experiment ended that day.

An AI experiment does not need to promise impact. It does need to choose what kind of impact it is testing.

Where product principles fade

AI-first work moves fast.

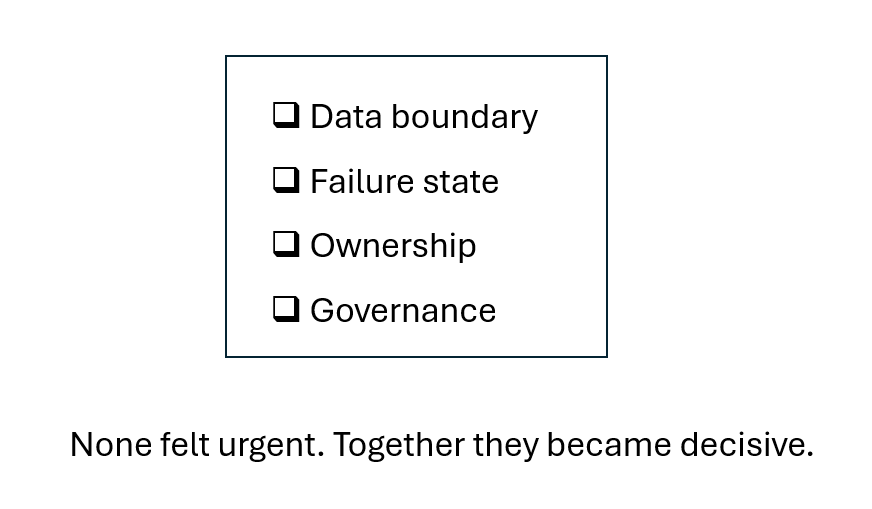

Fast work makes decisions feel temporary:

Should the prototype touch real data?

Is there a fallback state?

Are corrections logged?

Is this a demo or a system?

Each decision seems small.

Together, they decide whether something is becoming a product.
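
One way to keep these decisions from feeling temporary is to write them down next to the experiment. The sketch below is a hypothetical illustration in Python, not a prescribed process. The `ExperimentRecord` class, its field names, and the `owner` field are all assumptions made for this example. Each of the first three questions above maps to one field, and the fourth (demo or system?) is answered from the other three.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ExperimentRecord:
    """Hypothetical record of the decisions listed above (names are illustrative)."""
    name: str
    touches_real_data: bool
    has_fallback_state: bool
    logs_corrections: bool
    owner: Optional[str] = None  # assumed field: who owns productization, if anyone

    def open_questions(self) -> List[str]:
        """Return the decisions that still block a move from demo to system."""
        gaps = []
        if self.touches_real_data and self.owner is None:
            gaps.append("real data in use, but no named owner")
        if not self.has_fallback_state:
            gaps.append("no fallback state")
        if not self.logs_corrections:
            gaps.append("corrections are not logged")
        return gaps

    def is_demo(self) -> bool:
        """Treat the work as a demo while any question is still open."""
        return bool(self.open_questions())
```

A record like `ExperimentRecord("capacity-forecast", touches_real_data=True, has_fallback_state=False, logs_corrections=True)` would report two open questions, which is a cheap signal that the work is still a demo, however good it looks.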

Context feels editable. Governance feels early. Explainability feels deferrable.

Then the experiment reaches customers.

A workload placement tool once ranked options using a cloud recommendation service. Customers later learned that their workload characteristics had left their environment. The feature met its technical goal. It failed its trust test.

Product principles rarely break in one moment. They fade through convenience.

The line you eventually draw

As an AI demo gains traction, two forces appear.

You are asked to expand it because people want more.

You are asked to restrain it because rules were skipped.

That tension is where AI-first becomes AI theater.

Product ownership has not caught up. The demo has.

A useful test helps:

If this AI feature worked perfectly, what human responsibility would disappear?

If the answer is none, the work is still theater.

A feature UI once impressed sales teams. It removed no operational burden. It changed no workflow. It remained a story about a future that never arrived.

What productive AI-first feels like

Productive AI-first feels different.

Heavier in some places. Clearer in others.

You still experiment.

You experiment toward an outcome.

Instead of asking, “Can AI do this?” you ask, “What changes if AI does this?”

Instead of building around possibility, you build around responsibility.

You begin with a workflow.

You define what should improve.

You let AI assist inside those boundaries.

Customers once complained that capacity warnings arrived too late to act on. A builder PM generated a prediction graph inside the product development environment, working under production data access and security rules. Engineering tested it. Customers reacted early. The workflow improved before the model did.

Examples start to look like:

Capacity agents that notify before failure

Usage graphs grounded in internal data

Support assistance with human override

Recommendations designed for error

UI prototypes built in internal environments

The difference is in what the feature assumes.

A modern interface that works only when a cloud connection is available is a design outcome.

A basic interface that works inside the product is a product outcome.

With the outcome anchoring the change, AI moves into the background.
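
As a rough illustration of "recommendations designed for error" and "human override" from the list above, here is a minimal Python sketch. Everything in it is an assumption made for the example: the `recommend` function, the `Recommendation` type, the 0.8 confidence threshold, and the idea that the model returns a `(choice, confidence)` pair. The point is only that failure behavior is decided up front, so the workflow keeps working when the model does not.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass
class Recommendation:
    choice: str
    source: str         # "model" or "fallback"
    needs_review: bool  # True when a human should confirm before acting


def recommend(
    model: Callable[[dict], Tuple[str, float]],
    workload: dict,
    default_choice: str,
    min_confidence: float = 0.8,  # assumed threshold; tune per product
) -> Recommendation:
    """Return the model's choice, or a known default when the model fails."""
    try:
        choice, confidence = model(workload)
    except Exception:
        # Failure behavior: fall back to the default and ask for review.
        return Recommendation(default_choice, "fallback", needs_review=True)
    if confidence < min_confidence:
        # Low confidence: show the model's choice, but leave the decision to a person.
        return Recommendation(choice, "model", needs_review=True)
    return Recommendation(choice, "model", needs_review=False)
```

With this shape, the product defines what happens on a bad day before the model ever ships, and the human override is part of the return value rather than an afterthought.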

What this teaches about product ownership

AI-first does not change what product managers are responsible for.

It makes those responsibilities visible.

AI experiments that touch the product still follow product processes. They still need explainability, governance, and ownership.

Builder PMs keep building. They switch hats after the outcome is clear.

This is how experimentation survives contact with reality.

A recommendation engine once gained attention in demos. It gained impact only after it was embedded into a product workflow and tied to a measurable decision.

Closing

Product ownership is your guide.

Experimentation remains essential to AI-first product management.

Experiment as if the result might matter.

When experiments carry product outcomes, AI-first stays productive, responsible, and real.

If you want to go deeper, a companion guide for paid subscribers turns these ideas into concrete product habits. It includes 6 examples of AI work that apply product principles, along with product practices you can start using now to match outcomes to your AI experiments.

Looking for more practical tips to develop your product management skills?

Product Manager Resources from Product Management IRL

Connect with Amy on LinkedIn, Threads, Instagram, and Bluesky for product management insights daily.

The "AI theater" concept is painfully accurate. I see it constantly - teams bolting a chatbot onto their product and calling it AI-first.

The test I use: does the AI make decisions that affect outcomes, or does it just present options? If every output still requires a human to review, approve, and execute - that's theater.

In my own setup, the shift from theater to real happened when I stopped reviewing every agent output and started reviewing outcomes instead. Let the agent decide how, measure what it produces. Huge difference in what becomes possible once you make that leap.

I agree with this so much. That's why I've been teaching my AI product management classes about the need to put contracts and constitutions in place before a line of code is vibed, so that the agentic system slinging the software into existence has some boundaries. It's also why I teach product managers to take a step back and look at both internal and external risks in just the areas you've identified, and then some. And yes, have some conversations with legal and finance before you seek sponsorship from the CPO and CFO. It'll save you from getting vibe fired.