Why AI Initiatives Break Normal Product Manager Instincts

AI changes product work faster than most organizations realize

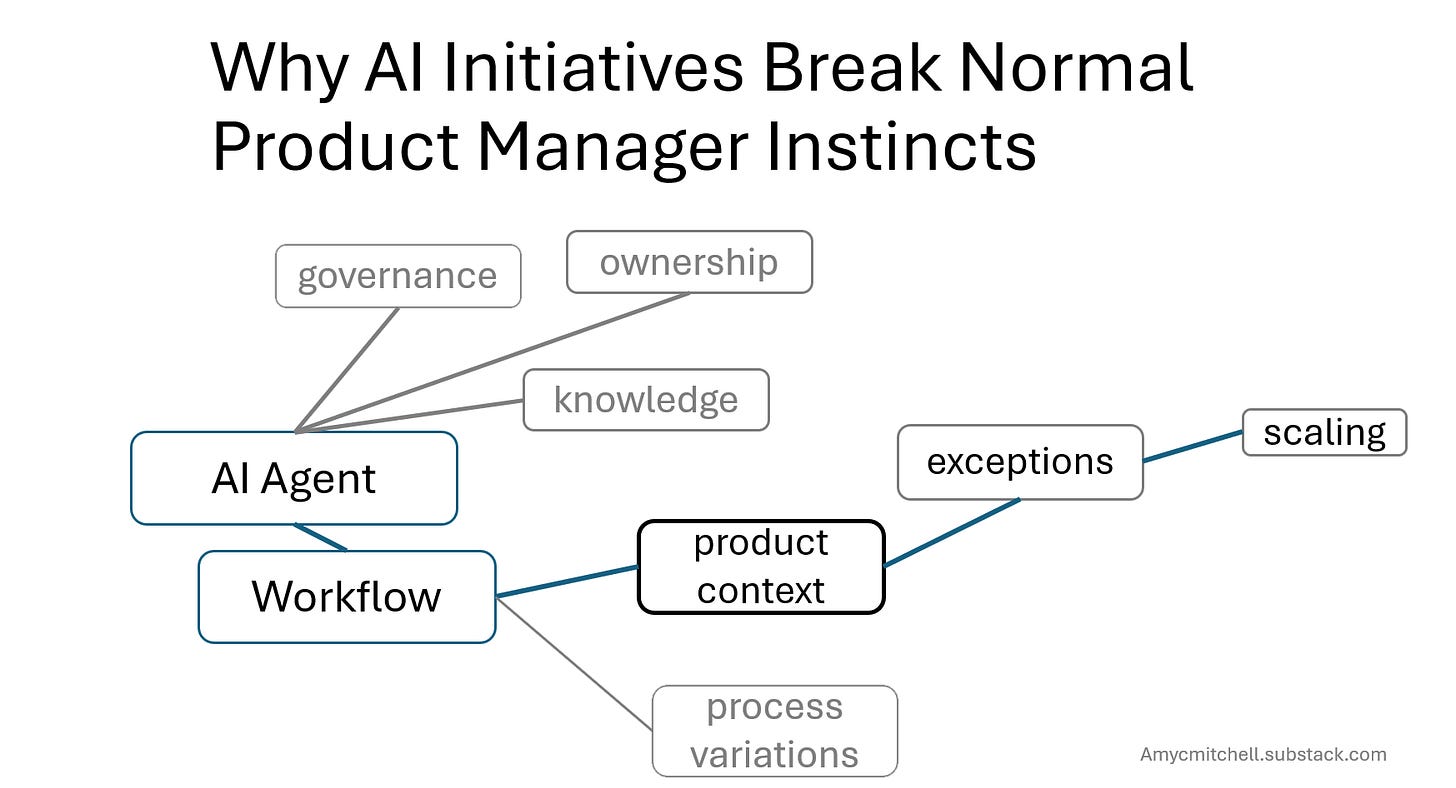

An AI initiative starts simply enough: add an AI agent to a time-consuming product management process.

At first, the conversation sounds like normal product work:

Requirements,

Capabilities,

Integration questions,

User experience.

Then the initiative starts expanding.

The workflow is inconsistent across teams.

Documentation is stale.

Ownership is unclear.

Exceptions are handled differently across products.

Within a few meetings, the discussion is no longer about the AI initiative.

It becomes:

Process redesign,

Governance,

Knowledge quality,

Operational consistency,

And organizational risk.

This is the moment product managers feel the urge to stabilize the system before moving further.

Those instincts are usually correct. But this is what makes AI initiatives difficult.

AI initiatives look like normal product work until you see that AI needs more than normal product work.

What looks like a normal product initiative quickly expands into operational and organizational redesign.

Normal features don’t change the workflow, operate dynamically, or need ongoing evals to keep them tame.

Adding AI to anything that touches the product pulls you into disruption. The closest pattern for tackling AI is transformation. The main difference is that the AI change doesn’t come with the transformation label.

In the usual stable product environment, you drive for clarity and risk management as a product manager.

In transformation environments, you need high-velocity learning.

This changes how product managers operate.

The familiar AI initiative that suddenly expands

You finally have a real opportunity to use an AI agent. Leaders are investing in AI before you even suggest an approach.

You decide product requirements documents (PRDs) are a high-leverage place to use an AI agent. Mistakes in a PRD have many downstream impacts, and product managers never seem to get enough time to do the PRD right.

Quickly you find:

Workflow differences

Multiple owners of PRD contents

Program managers and engineering teams have little trust in PRDs

Non-existent governance of PRD contents

Scope explosion

This is where your product manager instincts kick in.

Why your product manager instincts slow the initiative

Going into normal product management mode, you look for stable and known items to build on. But every product does requirements differently.

Continuing product manager thinking leads to more concerns:

If the PRDs and requirements workflows are so different, how can you reuse the agent?

If engineering already doesn’t trust PRDs, a mistake from AI could erode that trust further.

Scaling starts to look like building a custom agent for every product.

These are huge caution signs for a normal product initiative.

AI initiatives become transformation work early

AI systems interact with workflows dynamically instead of deterministically.

That means organizational inconsistency becomes visible much earlier than with traditional feature work.

Your instinct for a stable environment is technically right. But establishing stability before a dynamic AI agent can contribute to a PRD will delay the benefit of the initiative.

On the other hand, if you rush into AI assistance without context and guardrails, you could lose trust and credibility.

This is a situation where traditional product management techniques delay progress. Transformation leadership can enable progress.

Instead of going into systems thinking and stabilization strategies, you drive for learning.

How does learning make progress? Your systems thinking shows plenty of problems to solve. But you and your team haven’t learned enough about a system with AI to understand the problems.

Transformation product management focuses on enabling the next step. Stabilization product management focuses on optimizing several steps ahead.

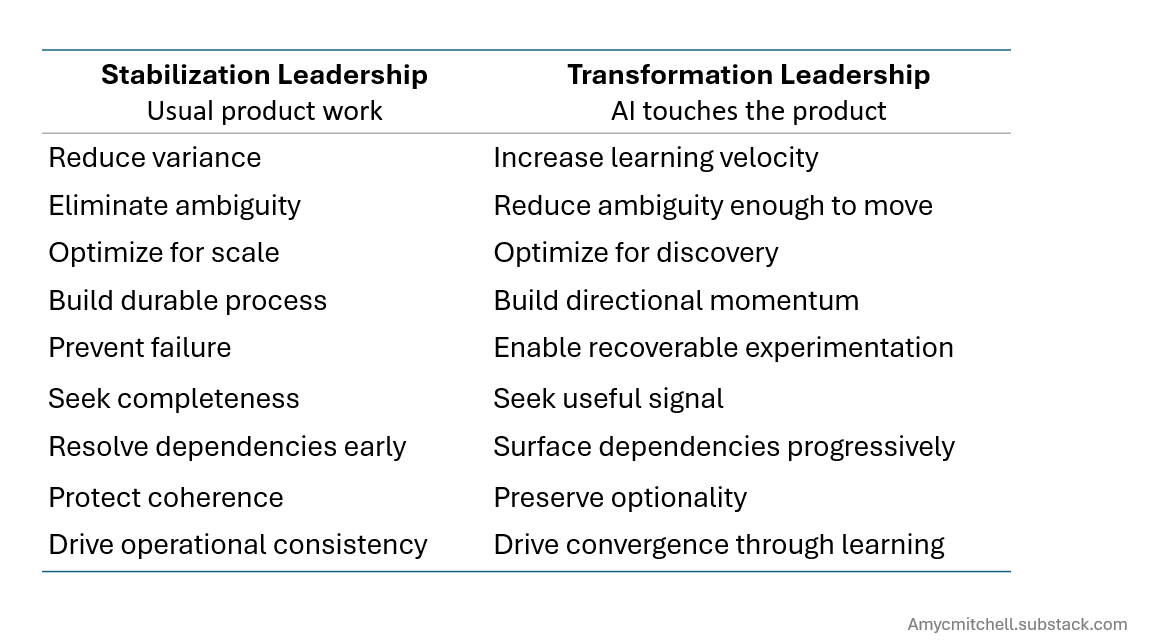

Here is a comparison of these two approaches to product work:

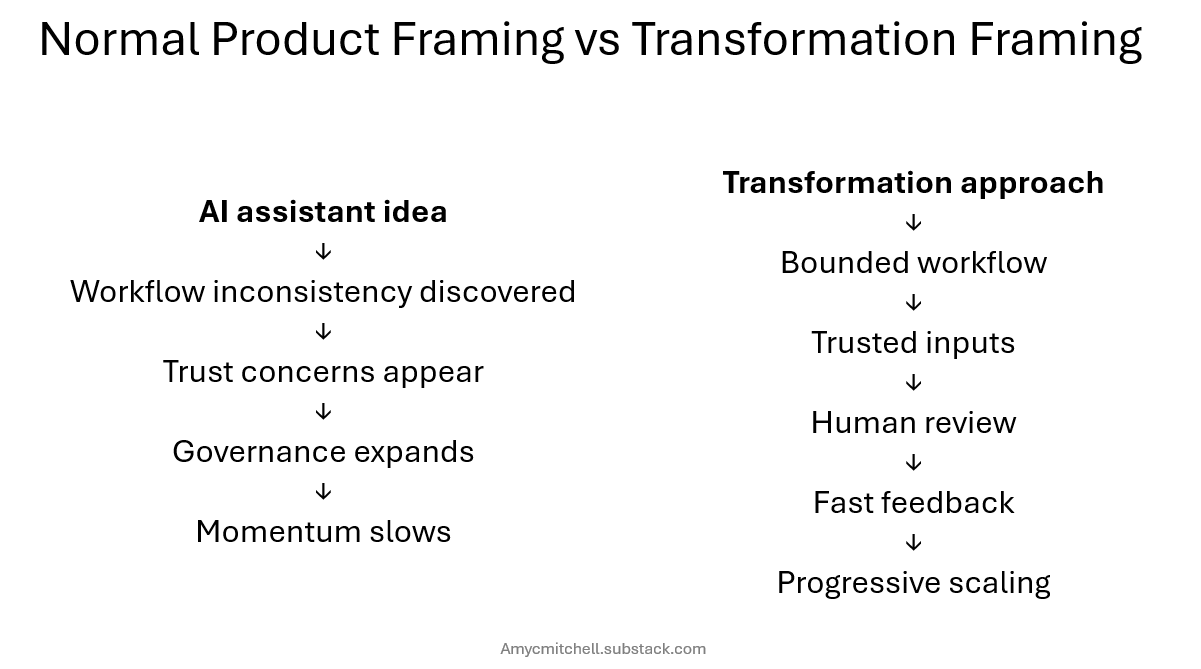

The trap: solving the whole system too early

Early AI transformation conversations often create a trap for product managers and systems thinkers.

You can see where the initiative will eventually struggle:

trust in generated outputs,

inconsistent process inputs,

operational gaps,

unclear ownership,

stakeholder concerns,

scaling limits.

So the natural response is to improve the system before taking AI into the workflow.

That works well in stable environments.

It works less well during the earliest transformation stages because the organization is usually trying to answer a different question first:

“Can we create useful learning quickly enough to justify continued movement?”

Learning enough to start is a different goal from getting to a stable environment.

Transformation is new territory. You don’t know what works yet, so your focus is learning enough for the next step.

Strong transformation leaders understand that introducing every important concern at once can accidentally overwhelm the organization’s ability to move.

This is because the organization has not yet built enough shared context to absorb the changes.

Using transformation thinking, the AI agent idea is about:

Learning enough

For controlled risk

In a bounded pilot

Shaping without slowing

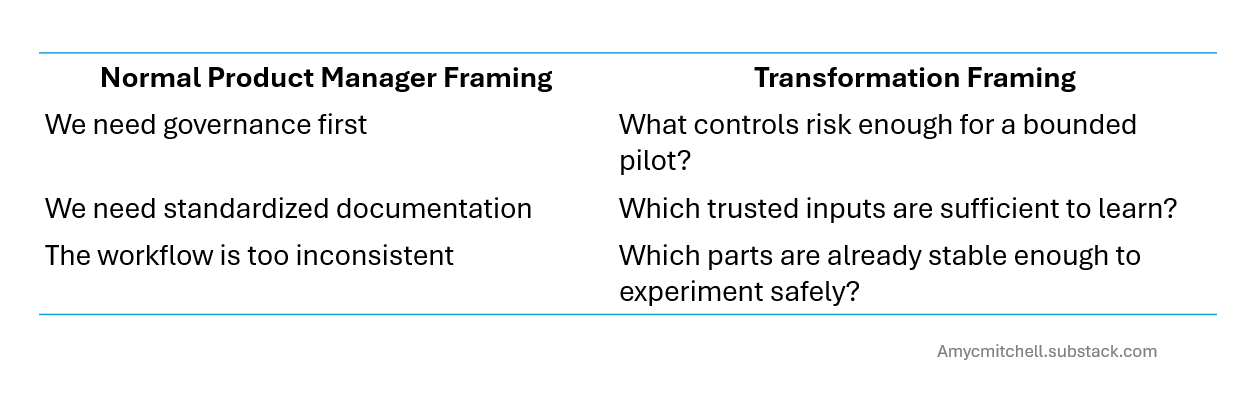

Strong transformation product managers shape the system early just like product thinkers.

They just shape it differently.

Instead of trying to fully stabilize uncertainty, they:

narrow the scope,

reduce the exposure surface,

constrain the workflow,

and create bounded learning environments.

Instead of:

“We need enterprise knowledge quality solved first.”

The framing becomes:

“Let’s start with one trusted workflow and learn from that.”

Instead of:

“The process is too inconsistent for AI.”

The framing becomes:

“Which parts of the workflow are stable enough to support useful experimentation?”

This is sequencing complexity.

You still apply systems thinking at the right altitude for the organization’s current learning phase.

That often means:

smaller experiments,

narrower context,

faster feedback loops,

and less pressure to solve future scaling problems immediately.

That is because early transformation phases are about generating believable learning.

The leadership transition

Until AI touches your product, you are rewarded for stabilization leadership:

Seeing risk early

Improving operational coherence

Designing scalable systems

AI transformation changes the sequencing of your instincts.

The focus is more on learning and less on rigor. The learning focus includes:

Where workflows are stable

Which inputs matter the most

What level of human review is needed

This is one of the hardest transitions for product managers building with AI.

What transformation-thinking product managers do early

When AI enters a workflow, transformation-minded PMs often:

choose one narrow workflow instead of redesigning the whole system,

identify trusted inputs instead of solving enterprise knowledge quality,

add human review instead of demanding full automation reliability,

measure learning velocity instead of scale efficiency,

and delay organization-wide standardization until patterns emerge.

The goal is to apply rigor in the sequence that the organization can absorb.

Transformation thinking is not permanent.

As learning stabilizes, workflows repeat, and trust increases, stabilization leadership becomes increasingly important again.

The mistake is introducing scale-stage rigor before the organization has enough learning to support it productively.

Conclusion: AI touching your product needs transformation thinking

AI initiatives often look like normal product work at the beginning.

But once AI starts interacting with real workflows, organizational inconsistency becomes part of the product problem itself.

That is why product manager instincts can suddenly create friction. Those instincts are optimized for a different phase of organizational maturity.

Transformation leadership is deciding:

which rigor is necessary for learning now,

and which rigor belongs later as confidence grows.

The strongest product managers in AI transformation are the ones who sequence systems thinking to preserve momentum while the organization learns.

That is the leadership shift.

Q&A

How do I get product team engagement on an AI transformation project?

Initially, work with a small team to learn how AI changes your product workflow. Usually, the product team gets involved before scaling to customers.

Won’t the AI transformation get paused to unify the product data and workflows?

No. You control the inputs to AI. A small set of inputs tuned for the product workflow is usually enough to make progress.

With senior leaders focused on the AI transformation, how can I just experiment with a fraction of the product?

Targeting a time-consuming part of the workflow can show a business impact. Dependency checking, for example, can be offloaded to the agent, freeing you up to focus on business growth. By narrowing your focus, you have results to show sooner.

How do I know when to move from experimentation to stabilization?

When the changed workflows become repeatable and usage is increasing. At this point, governance, standardization and scaling start adding value.

How small should a bounded AI pilot be?

Small enough to learn something with controlled risk. Large enough for a person to see a result in days or weeks.


TLDR Product featured Product Management IRL articles recently! This biweekly email provides a consolidated list of recent product management articles.

The Drip featured Product Management IRL articles. This newsletter has handpicked product management articles every day, summarized to give you the big picture of product management and tech.

Connect with Amy on LinkedIn, Threads, Instagram, and Bluesky for product management insights daily.